Netflix architecture

Web Server

Always On Open Connection

Traditional: start a thread per incoming connection and do blocking reads and writes. This exhausts memory (1000 stacks for 1000 connections) and pins down the CPU with context switching.

Non Blocking Async IO

Read and write callbacks for all open connections run on a single thread, which scales much better.

The application gets more complicated: the developer has to keep track of per-connection state, and can't use the thread stack for it because the thread is shared between connections. Do that with events or a state machine. Use Netty.
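A minimal sketch of the single-threaded, callback-driven model with an explicit per-connection state machine. It uses Python's standard `selectors` module rather than Netty, and the `Connection` class, the `serve` function and the one-line echo protocol are all hypothetical names chosen for illustration:

```python
import selectors
import socket


class Connection:
    """Per-connection state kept in an object instead of on a thread stack."""

    def __init__(self, sock):
        self.sock = sock
        self.inbuf = b""
        self.outbuf = b""
        self.state = "READING"  # READING -> WRITING -> CLOSED


def _close(sel, conn):
    sel.unregister(conn.sock)
    conn.sock.close()
    conn.state = "CLOSED"


def serve(server_socks):
    """Echo one line per connection, driving every socket from this one thread."""
    sel = selectors.DefaultSelector()
    for sock in server_socks:
        sock.setblocking(False)
        sel.register(sock, selectors.EVENT_READ, Connection(sock))

    remaining = len(server_socks)
    while remaining:
        for key, _events in sel.select(timeout=1):
            conn = key.data
            if conn.state == "READING":
                chunk = conn.sock.recv(1024)
                conn.inbuf += chunk
                if b"\n" in conn.inbuf:
                    # Full line received: move this connection to WRITING.
                    conn.outbuf = b"echo:" + conn.inbuf
                    conn.state = "WRITING"
                    sel.modify(conn.sock, selectors.EVENT_WRITE, conn)
                elif not chunk:
                    # Peer closed before sending a full line.
                    _close(sel, conn)
                    remaining -= 1
            elif conn.state == "WRITING":
                sent = conn.sock.send(conn.outbuf)
                conn.outbuf = conn.outbuf[sent:]
                if not conn.outbuf:
                    _close(sel, conn)
                    remaining -= 1
    sel.close()
```

The loop never blocks on a single socket; what each connection should do next is read from its `state` field, which is the bookkeeping a thread-per-connection server gets for free from the stack.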

Push Registry

Stored in Redis, with the mapping serialized

The data store should have the following characteristics:

- Low Read Latency

- Record Expiry

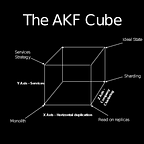

- Sharding

- Replication
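A small sketch of the record-expiry requirement, using an in-memory dictionary as a stand-in for Redis. It assumes the registry maps a client ID to the server holding that client's connection; `PushRegistry`, `register`, `lookup` and the injectable `clock` are hypothetical names. In Redis itself, expiry would normally come from a key TTL (for example the `EX` option of `SET`). Sharding and replication are not shown.

```python
import time


class PushRegistry:
    """In-memory stand-in for a Redis-backed registry with record expiry."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested without sleeping
        self._records = {}  # client_id -> (server_id, expires_at)

    def register(self, client_id, server_id):
        """Record which server holds the client's connection, with a fresh TTL."""
        self._records[client_id] = (server_id, self.clock() + self.ttl)

    def lookup(self, client_id):
        """Return the server for a client, or None if unknown or expired."""
        record = self._records.get(client_id)
        if record is None:
            return None
        server_id, expires_at = record
        if self.clock() >= expires_at:
            # Stale entry: the client may have gone away without deregistering.
            del self._records[client_id]
            return None
        return server_id
```

Expiry matters because a client can disappear without a clean disconnect; without it, stale mappings would pile up and messages would be routed to servers that no longer hold the connection.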

Message Processing (Routing, Queue , Deliver)

Kafka: message broker, decouples sender and receiver

Fire and forget: most senders drop the push message into the queue and forget about it. The few that care about the result can subscribe to the push delivery status queue or read from the Hive table.

Region Based Replication

Different queues for different priorities: guarantees priority inversion will never happen
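A rough sketch of the one-queue-per-priority idea, with in-memory deques standing in for the broker's queues. `PriorityRouter`, `publish` and `poll` are hypothetical names, and the two priority labels are assumptions:

```python
from collections import deque


class PriorityRouter:
    """Keeps a separate FIFO queue for each priority level."""

    def __init__(self, priorities=("high", "low")):
        self.queues = {p: deque() for p in priorities}

    def publish(self, priority, message):
        self.queues[priority].append(message)

    def poll(self, priority):
        """Called by the processor dedicated to this priority's queue."""
        queue = self.queues[priority]
        return queue.popleft() if queue else None
```

Because a high-priority message never shares a queue with low-priority ones, a backlog of low-priority traffic cannot sit in front of it.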

Multiple Message processors

Mantis: message processing system like Flink, uses Mesos

Long lived stable connection

- Great for client efficiency

- Terrible for quick deploys: already connected clients have to be forcefully migrated

- Thundering herd: a large number of clients trying to connect to the same service at the same time. Randomization helps

- The Amazon load balancer runs as an HTTP load balancer (Layer 7); it can be configured to run as a TCP load balancer (Layer 4)

- A larger number of smaller servers >>> a few big servers

- Auto scale on number of open connections
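One way randomization can soften a thundering herd is to give each connection a slightly different lifetime, so that clients do not all reconnect at the same instant. The sketch below is an illustration under that assumption, not a description of the actual implementation; `connection_lifetime`, `base_seconds` and `jitter_fraction` are hypothetical names and values.

```python
import random


def connection_lifetime(base_seconds, jitter_fraction, rng=random):
    """Return a lifetime drawn uniformly from base * (1 - jitter) to base * (1 + jitter)."""
    low = base_seconds * (1 - jitter_fraction)
    high = base_seconds * (1 + jitter_fraction)
    return rng.uniform(low, high)
```

For example, `connection_lifetime(1800, 0.2)` spreads reconnects for a nominal 30-minute connection over a window of roughly 24 to 36 minutes instead of a single moment.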

Push Messages

- On Demand Diagnostics (Push Message)

- Remote Recovery

- User Message

All communication uses JSON or Protobuf

Protobuf is faster than JSON for encoding and decoding ints and doubles
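To give a feel for why a binary format handles numbers more cheaply than text, here is a hand-written sketch of the two number encodings used on the Protobuf wire: base-128 varints for integers and 8-byte little-endian IEEE 754 for doubles. It does not use the Protobuf library, omits field tags, and does not measure speed; `encode_varint` and `encode_double` are hypothetical helper names.

```python
import json
import struct


def encode_varint(value):
    """Encode a non-negative int as a base-128 varint (7 bits per byte, low bits first)."""
    if value < 0:
        raise ValueError("this sketch handles non-negative ints only")
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)  # high bit set: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_double(value):
    """Encode a float as 8 little-endian IEEE 754 bytes."""
    return struct.pack("<d", value)


def json_size(value):
    """Number of characters JSON needs for the same number as decimal text."""
    return len(json.dumps(value))
```

The binary forms are produced with a few shifts or a straight memory copy, whereas JSON has to format the number as decimal text and parse it back on the other side.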

Deduplication happens on client